-

Posts

1,161 -

Joined

-

Last visited

-

Days Won

46

Everything posted by Izen Ears

-

It wasn't set up properly, or something was out of whack. (Looking at you, single solitary hex screw that holds everything on!) There are many small parts to Cinela Pianos but it's not that hard to figure out. With my Pianos, I can slam the boom hard on the ground and fly it quickly in all directions with zero (and yes, I mean zero) handling noise. The only drawback is how long it takes to take the mic in and out.

-

Looking for client IFB basket recommendations.

Izen Ears replied to RadoStefanov's topic in Equipment

Maybe a hanging shoe rack, mounted on a stand? That could hold a ton.

-

Yeah it's weird because AI would make it easy now!

-

Helios Welding Visualization System

Izen Ears replied to mono's topic in Cameras... love them, hate them

Dang! That is super cool!

-

Thank you! I appreciate the info.

-

Is there a simple wiring for this?

-

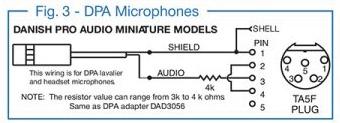

Is there a simple wiring for a 6060? All I see is this one, which says 3k - 4k. I have had clipping on yelling with both 6060s and 6061, in fact it seems there's hardly any difference between the two. But that could be because the resistors are different!

-

This is false, Rado is not being a troll. You're misusing the word. This is a "heated discussion," not one person trying to shit on everything anyone else says.

-

I'm so sorry for your loss.

-

Mystery: Why are footsteps on mic while dialogue is off mic?

Izen Ears replied to Beepy's topic in General Discussion

Oh man, why not talk to the mixer first? Seriously, before the internet, before any producers - talk to the sound dept.! I hate when a simple question goes through producers and the next thing I know, everyone's coming up to me asking a weirdly specific question that should have only been between me and post. Please dude, contact the mixer. I will say the mixer absolutely should have made clear notes. Like "footsteps on boom, dialog on wires" for example. Do you have the sound reports, and if so what do they say? Are there additional tracks with the dialog clear on wires?

-

Dec 26, 2024

"The harpsichord is a musical instrument that, by combining strings and keys in a precise mechanism, achieves a characteristic sound. In 2000, in La Selva del Camp (Tarragona), Raúl Martín was one of those artisan musicians who, captured by the formal and sonorous beauty of keyboard instruments, dedicated himself to the construction of clavichords, spinets and harpsichords."

Jun 21, 2019

"In 1793, George Washington acquired a large harpsichord for his step-granddaughter, Eleanor "Nelly" Parke Custis. For over two and a half years, John Watson, Conservator of Early Keyboard Instruments, worked to create a replica of Nelly Custis's 1793 Longman & Broderip 2-Manual Harpsichord. Dr. Joyce Lindorff, Professor of Keyboard Studies at Temple University, demonstrates what makes this unique instrument so special."

Feb 19, 2018

"For years, the harpsichord at Mount Vernon stood silent, rendered unplayable by age and wear. Its sound, created by a unique design that combined elements of both the classic harpsichord and the newly emerging piano, was lost to time – until now. Through the efforts of harpsichord maker John Watson and the support of Colonial Williamsburg and the curators of Mount Vernon, this piece of musical and American history is being reborn."

English Double Harpsichord restoration blog episodes playlist, below (included here is the quoted accompanying descriptor text, when available from the YT page, referencing the particular episode #, posting date.)

episode #1 (Sep 6, 2023)

"Modern pianos have actions that can be traced back to a French builder named Erard. His innovations stretch beyond double escapement action though. He also invented agraffes, the little bolts with holes through which the strings pass. Anyway, the Erard action, though tremendously expressive for pianists, is also ridiculously finicky, requiring enormous amounts of regulation and maintenance to provide the player with all responsive touch expected of a fine instrument. The Hickman action, on the other hand, eliminated most of the squishy felt and leather contact points that wear and compress with use in a conventional action, and therefore requires less than a tenth the maintenance. Most importantly for the builders, the Hickman action requires less than a quarter of the setup time at the factory. Good news for the pianist, maybe not so good news for us technicians. But wait, there's more! A lever on the side of the keyboard allows the player to adjust the touch weight. It's like choose your own adventure for the piano. Sadly, the stock market crash of 1929 happened within months of the Hickman Action's debut, and only a handful of pianos were built, even fewer of which survive."

episode #2 (Sep 6, 2023)

"Mid-century harpsichords were often built with a combination of old and new technology. As pianos evolved into their modern form with every aspect of the action adjustable, many harpsichord builders followed suit. The end result was cumbersome actions that required more maintenance and care than their elders. Thankfully, the trend is back towards more traditional techniques. Anyway, my 1974 Brad Benn English Double Harpsichord came to me with deteriorated jacks, broken and missing plectra and tongues, and the replacement parts I acquired from Brad Benn himself were old, deteriorated, and in some cases, poorly modified. The only way I could be sure of a reliable playing experience was to replace the whole action, and I taught myself CAD to do just that."

episode #4 (Sep 6, 2023)

"Harpsichord jacks require some accoutrements before they can be installed. The height adjustment screw goes in the bottom, though traditional harpsichords don't have them. I kept them so I wouldn't have to replace the lower register guide through which the screws fit. Up top, the tongue goes in the jack, the plectrum goes through the tongue, and the damper goes into the clip."

episode #6 (Sep 6, 2023)

"It's shocking how short the distance is between off and on for harpsichord registers. Harpsichords are just like organs, in that tone is altered not by how hard or soft you press the keys, but by changing registration. The register itself, which looks like a ladder with the jacks sliding up and down between the rungs, moves only about 2 millimeters away from the strings so the plectra won't reach them."

episode #12 & #13 (both Nov 7, 2023)

"English Double Harpsichords have two sets of jacks plucking the same choir of strings. Configured as an either/or register, the player can pick between the forward 8' that sounds like any other, or the Nasale, whose plucking point is so much closer to the end of the strings' speaking length, the volume is much quieter and the tone more nasal, just like when a guitarist strums closer to the bridge. With 4 rows of jacks and 3 choirs of strings in close quarters though, getting the registers set just right so all the strings, especially in the bass, can sound without buzzing against neighboring jacks takes loads of time and patience. The most important rule to follow, is to make all the appropriate adjustments for both the forward 8' and the Nasale on the very first bass note before you proceed with adding and voicing jacks up the scale on either one. Once the lowest note can be played in all registrations on both manuals, coupled and uncoupled, without any buzzing or unwanted damping, you should be good to go. But test every note as you go to avoid headaches later."

episode #15 (Nov 7, 2023)

"I've installed all 63 jacks in the 4' choir after more than a month away, working on pianos. With the Back 8' and the 4' choirs up and running, the lower manual is done! Since this is an English Double though, I'm not 2/3 done like I would be with a Flemish, I'm only half done since the upper manual operates the forward 8' and the Nasale, which I'll restore in parallel, note by note. An observant viewer might see that the blue and red jacks for the first note are already in place. Only 62 notes, and 124 jacks remain."

A harpsichord is a larger keyboard instrument where strings are plucked by a mechanism called a "jack," while a spinet is a smaller, more compact version of a harpsichord, and a clavichord is a quieter keyboard instrument that produces sound by striking the strings with a metal tangent, making it more suitable for practice or intimate playing; essentially, all three are early keyboard instruments but differ in their size and sound production mechanism, with the harpsichord being the loudest and the clavichord the softest.

Key points about each instrument:

• Harpsichord:
◦ Larger, grander design
◦ Strings are plucked by a "jack" mechanism
◦ Can produce a bright, resonant sound

• Spinet:
◦ Smaller, more compact version of a harpsichord
◦ Often has a smaller soundboard and shorter strings
◦ Considered a domestic instrument for home use

• Clavichord:
◦ Produces sound by striking the strings with a metal "tangent"
◦ Much softer sound than a harpsichord, suitable for quiet practice
◦ Considered a personal instrument for composing and practicing

Jul 4, 2012

"Building an English Spinet and a Violin in the old way. Master Musical Instrument Maker - George Wilson. Journeymen - Mark Hansen, Larry Bowers, & Curtis Collinsworth."

Whoa! That's a serious Sunday morning session to go through all that! Have you watched all of them? And read all that stuff? Thanks a lot!

-

Yamaha DM3-D 22-channel digital small format mixer with Dante

Izen Ears replied to LoganSound's topic in Equipment

Thank you all for the correction, der!

-

Yamaha DM3-D 22-channel digital small format mixer with Dante

Izen Ears replied to LoganSound's topic in Equipment

Make an adaptor cable - 2x XLR4M to 1x XLR4F. Join them hot to hot and ground to ground. Then plug into two 12 volt outputs and voila, the output is 24 volts! EDIT - oops I got that backwards sorry! Thanks for the corrections!

-

I do too. Humor is always at the expense of someone, by definition. It could be yourself, poor or rich people, animals, a tree, etc. If the people who are the butt of your joke have been oppressed, persecuted, or killed, your joke isn't very funny in my opinion. The economy comment was for that other user who posted that he thought the orange idiot was good for our economy, simply because they did well.

-

How Important is Copyright Protection for Creators?

Izen Ears replied to Wil Jacques's topic in General Discussion

Nice catch there Jim!

-

That's a really weird thing to declare. I've had 25 mph wind and zero wind noise using a Cinela Piano. (At that point it's the wind-blowing-things noise rather than direct wind noise!) Maybe you could bury the dead cat (with honors and ceremony) and get a better system? Rolling off 80 - 90 Hz can help also. That statement of yours does not track in my world. That Kupo / Rycote boom holder design would never work for me, I like having a pin to secure rather than the shaft of the holder - the boom boy is perfect. I've used the same one for over 20 years. With the more expensive models, I visualize the whole thing falling over sideways while adjusting the angle. Maybe you all set the angle first so it wouldn't be an issue. Those photos above show a flat angle (parallel to the ground) which I do not use. I like the cradle to be higher and have the pole come down at an angle. Maybe I've been doing it wrong haha! I actually did a few sit down interviews last week and one subject accidentally kicked a leg of the stand. It was a hard kick, and luckily the person did not stumble. I use a baby digital with a 35-pound shot bag, and it didn't move at all. A lightweight light stand would have flown across the room and the pole would have fallen, and you know it woulda landed directly on the Schoeps.

-

No. That map is by area, not population. In reality there are lots of teeny tiny red dots everywhere, and big huge blue blobs where cities are. That map is highly misleading, bordering on propaganda in my opinion. Do you really think every single person in Oklahoma voted R? Anyone who says the economy was made better is delusional. Anecdotes read, to me, as this: "daddy maxed out his credit cards and then I got to spend all that money!" Trumplethinskin increased the debt by almost 8 trillion. Anyone who thinks he was good for the economy has their head deep in the sand.

-

Statements like this mean you can't possibly live on the same planet as I do.

-

I've never had a C stand fail in wind or otherwise in 21+ years, but the next step up is a baby digital, which is bigger and heavier but more stable, and it has a rocky mountain leg.

-

Simple 3D printed low profile end caps for Neutrik XX connectors

Izen Ears replied to soundmanjohn's topic in Do It Yourself

Well hot dog! I remember when libraries added computers, DVDs, and graphic novels. Now there's so much more. A music studio???!!! Dang! I'm gonna go try and print some stuff. And I wanna play with that Akai MPC. Large format printer. Double dang dude!

Dan Izen

-

Simple 3D printed low profile end caps for Neutrik XX connectors

Izen Ears replied to soundmanjohn's topic in Do It Yourself

What! I had no idea.

-

Zeppelin and dead cat suggestions for MKH - 50

Izen Ears replied to Samuel Floyd's topic in Equipment

I hate the term "dead cat." I call it a beaver or a windjammer. The Pianos almost look like a raccoon.

-

Simple 3D printed low profile end caps for Neutrik XX connectors

Izen Ears replied to soundmanjohn's topic in Do It Yourself

It looks like they might snap in?

-

Simple 3D printed low profile end caps for Neutrik XX connectors

Izen Ears replied to soundmanjohn's topic in Do It Yourself

Awesome work!! It would be great if this stuff was available already printed. They would absolutely sell. At this point, I think all of our production sound houses should have a 3-D printed section! I see this as a gap in our market that isn't being filled anywhere.

-

YES! Thank you so much for keeping this amazing site alive. It is a rare place of real people with knowledge and opinions. I've learned so much from this place!